Shadow AI Definition

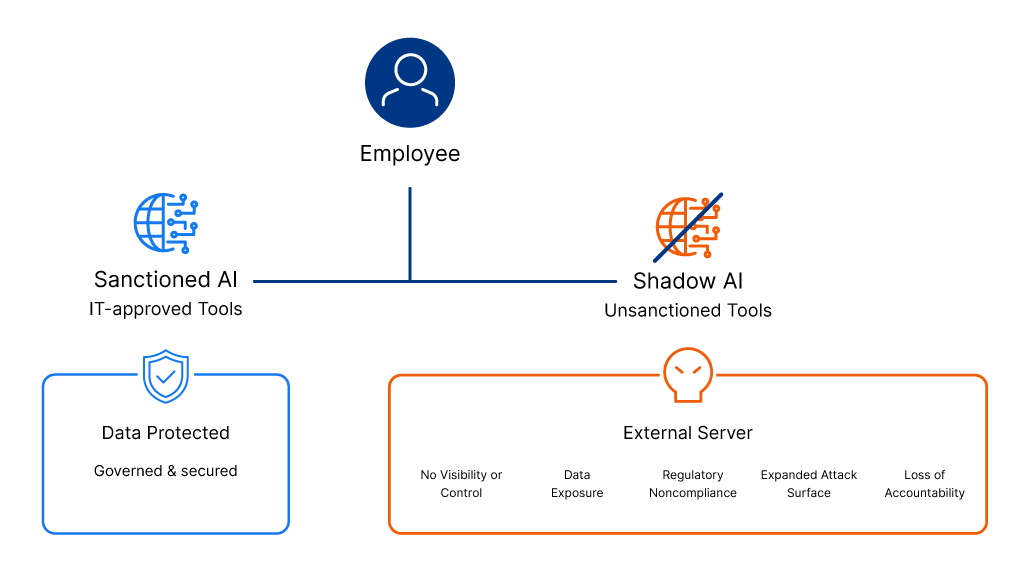

Shadow AI is the use of artificial intelligence tools, applications, or systems by employees or users without the explicit authorization, knowledge, or oversight of an organization’s IT or security teams. Shadow AI encompasses any AI deployment, including generative AI applications, machine learning models, AI-powered features within approved software, and AI agents, that operates without explicit authorization, visibility, or governance oversight. Much of it runs inside existing tools and platforms and is often reached through sanctioned channels, which creates security and compliance risks that organizations aren’t equipped to track or control.

Shadow AI FAQs

What is Shadow AI?

Shadow AI represents one of the fastest-growing security challenges facing modern enterprises as artificial intelligence adoption accelerates. When employees use ChatGPT to draft customer emails, upload proprietary code to GitHub Copilot for debugging assistance, process financial data through Claude for analysis, or leverage AI features embedded within approved SaaS applications without security review, they create shadow AI deployments that IT and security teams cannot see, monitor, or protect. These unsanctioned AI tools operate outside established security governance frameworks, bypass security controls, and process sensitive data through external systems where organizations have no visibility into how information is stored, whether it’s used for model training, or if it’s adequately protected from unauthorized access.

The explosive growth of shadow AI stems from the unprecedented accessibility and ease of use that characterize modern AI tools. Unlike traditional software that required installation or procurement approval, most AI applications are available instantly through web browsers and mobile apps, or as features quietly embedded within existing platforms. Employees turn to shadow AI not from malicious intent but from a genuine desire to enhance productivity, automate repetitive tasks, and solve problems more efficiently than sanctioned tools allow. Each action seems harmless in isolation, but collectively these actions create significant security exposure that organizations struggle to detect and control.

What Are the Risks of Shadow AI?

Shadow AI risks extend far beyond simple policy violations, creating vulnerabilities that threaten data security, regulatory compliance, and organizational reputation in ways that traditional shadow IT typically does not. Not all shadow AI represents a deliberate risk; employees often adopt AI capabilities embedded in familiar tools simply to get work done faster. But without visibility into what’s running and what it can access, organizations can’t distinguish between benign usage and activity that exposes sensitive data, creates compliance liabilities, or expands the attack surface.

Organizational Risks of Shadow AI

Data Breaches and Sensitive Information Exposure

The most immediate shadow AI risk is unintentional data leakage when employees input confidential information into external AI platforms. Customer records, proprietary source code, financial data, strategic business plans, employee information, and intellectual property can be exposed when processed through unsanctioned AI tools, whether the provider retains inputs on its own servers, uses submitted data to train future model versions, or surfaces sensitive details in responses to other users.

Regulatory Noncompliance and Legal Liability

Shadow AI creates significant compliance vulnerabilities when AI processing conflicts with data protection regulations and industry-specific mandates. Organizations subject to GDPR, HIPAA, CCPA, PCI DSS, or other sector-specific requirements may face substantial fines when shadow AI handles regulated data without proper controls, audit trails, or data processing agreements. GDPR violations alone can result in penalties of up to €20 million or 4% of worldwide annual turnover, whichever is higher, for unlawful data processing.
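As a worked example of that ceiling: under GDPR Article 83(5), the cap is whichever of the two figures is higher, so the percentage governs once worldwide annual turnover exceeds €500 million (4% of €500 million is exactly €20 million). The minimal Python sketch below computes only this theoretical maximum, and the function name is illustrative; regulators set actual fines case by case, weighing factors such as the nature, gravity, and duration of the infringement.

```python
def gdpr_max_fine_eur(worldwide_annual_turnover_eur: int) -> int:
    """Theoretical GDPR Art. 83(5) maximum: EUR 20 million or 4% of turnover, whichever is higher."""
    fixed_cap = 20_000_000
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    turnover_cap = worldwide_annual_turnover_eur * 4 // 100
    return max(fixed_cap, turnover_cap)


print(gdpr_max_fine_eur(2_000_000_000))  # 80000000 (4% cap applies)
print(gdpr_max_fine_eur(100_000_000))    # 20000000 (fixed cap applies)
```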

Expanded Attack Surface and Security Vulnerabilities

Shadow AI introduces potential entry points for threat actors and expands the organization’s attack surface beyond what security teams can defend. Unsanctioned AI applications may contain unpatched vulnerabilities, insecure APIs, weak authentication mechanisms, or connections to malicious infrastructure that attackers exploit to gain initial access or move laterally through networks. Further risk comes from overprivileged integrations, API keys exposed in code repositories, compromised third-party models that have been poisoned or manipulated, and personal devices that bypass corporate security controls. Organizations cannot apply security patches, enforce multi-factor authentication, monitor for suspicious activity, or revoke access to shadow AI tools they don’t know exist, leaving critical gaps in their security posture.

Loss of Accountability and Governance

Shadow AI fundamentally undermines an organization’s ability to maintain accountability for AI-generated decisions and outputs. When employees rely on unauthorized AI models to analyze data, generate content, or make recommendations, organizations cannot verify the accuracy of results, trace the source of errors, audit decision-making processes, or determine liability when AI outputs cause business harm or reputational damage. Biased training data, model drift, hallucinations, and manipulated outputs can lead to poor strategic choices, discriminatory practices, customer service failures, or public relations disasters, all without the organization having any audit trail to understand what happened or who was responsible. The absence of governance means companies cannot enforce ethical AI use, prevent harmful applications, or demonstrate responsible AI practices to stakeholders, customers, or regulators.

What is the Difference Between Shadow AI and Shadow IT?

Shadow IT and Shadow AI both involve technology used without formal IT approval, but they create different types of risk. Shadow IT refers to any unauthorized software, cloud service, or device used inside an organization, such as employees storing work files in Dropbox or Google Drive, or adopting tools like Trello without review. The primary risks center on visibility gaps, unmanaged access, and data governance issues. Shadow AI, by contrast, involves unauthorized use of artificial intelligence tools such as ChatGPT or Claude, or unsanctioned machine learning deployments. The key difference is that AI systems don’t just store or transmit data; they analyze, transform, and may retain or learn from it. This creates additional risks like prompt data exposure, inference attacks, and uncontrolled automated decision-making. While Shadow IT is largely an access and infrastructure problem, Shadow AI introduces AI-specific data processing and model governance risks that traditional security controls often cannot detect or manage.

How to Detect Shadow AI

Detecting shadow AI requires a fundamentally different approach than traditional shadow IT discovery because AI tools often operate through standard web browsers, embed themselves within approved applications, or appear as features rather than standalone software installations. Organizations need shadow AI detection capabilities that go beyond what conventional security tools were designed to catch. Existing solutions, whether network monitoring, endpoint detection, DLP, or CASB, were built for different threat models and often lack the context to identify AI-specific activity patterns. Effective shadow AI discovery combines multiple detection methods to create comprehensive visibility across the AI attack surface.

Network Traffic Analysis and API Monitoring

Shadow AI detection tools should continuously monitor network traffic for connections to known AI platforms, APIs, and model endpoints that indicate unauthorized AI usage. Organizations can identify when employees access public AI services like OpenAI, Anthropic Claude, Google Gemini, Hugging Face, or other AI providers by analyzing DNS queries, HTTPS traffic metadata, and API call patterns that match AI interaction signatures. Advanced monitoring examines outbound connections for characteristic AI traffic, including large data transfers to AI platforms, frequent API calls with JSON payloads typical of AI requests, WebSocket connections used by real-time AI chatbots, and authentication tokens for AI services appearing in network logs. This network-level shadow AI discovery reveals both obvious usage through consumer AI websites and more sophisticated deployments where developers integrate external AI APIs into internal applications without approval.
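A minimal sketch of the DNS side of this analysis, assuming resolver logs have already been exported as plain text in a hypothetical `<timestamp> <client_ip> <queried_domain>` format. The domain list is illustrative and far from exhaustive; in practice it would come from a maintained feed of AI service endpoints. Matching on the dot boundary (`endswith("." + d)`) catches subdomains such as `api.openai.com` without flagging look-alikes such as `notopenai.com`.

```python
from collections import Counter

# Illustrative, non-exhaustive list of AI service domains (an assumption, not a curated feed).
AI_DOMAINS = {
    "openai.com",
    "chatgpt.com",
    "anthropic.com",
    "claude.ai",
    "gemini.google.com",
    "generativelanguage.googleapis.com",
    "huggingface.co",
}


def matches_ai_domain(domain: str) -> bool:
    """True if the domain equals a listed AI domain or is a subdomain of one."""
    domain = domain.lower().rstrip(".")  # DNS names may carry a trailing root dot
    return any(domain == d or domain.endswith("." + d) for d in AI_DOMAINS)


def ai_queries_by_client(log_lines):
    """Count AI-domain DNS queries per client IP.

    Assumes the hypothetical log format '<timestamp> <client_ip> <queried_domain>'.
    """
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines
        _, client_ip, domain = parts[:3]
        if matches_ai_domain(domain):
            counts[client_ip] += 1
    return counts


logs = [
    "2024-05-01T09:00:01 10.0.0.12 api.openai.com.",
    "2024-05-01T09:00:03 10.0.0.12 claude.ai",
    "2024-05-01T09:00:05 10.0.0.40 intranet.example.com",
    "2024-05-01T09:00:07 10.0.0.40 notopenai.com",
]
print(ai_queries_by_client(logs))  # Counter({'10.0.0.12': 2})
```

DNS logs alone miss clients that use DNS over HTTPS or connect by hard-coded IP address, which is why this signal is best combined with HTTPS metadata and API call analysis.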

Endpoint and Browser Extension Monitoring

Organizations must deploy endpoint detection capabilities that identify AI tools installed on user devices or operating through browser extensions and web applications. Shadow AI detection at the endpoint level captures locally installed AI models, browser extensions that add AI features to web applications, desktop AI applications downloaded outside official channels, and development environments configured with AI capabilities. Browser activity monitoring specifically tracks visits to AI platform websites, prompts entered into web-based AI chatbots, file uploads to AI services, and use of AI features within web applications, providing visibility into the most common vector for shadow AI adoption. Endpoint agents can also detect when users run AI models locally, access AI services through virtual private networks to evade corporate controls, or use personal devices for AI-powered work tasks.
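One concrete piece of endpoint monitoring is inventorying browser extensions. Chromium-based browsers store each installed extension with a `manifest.json` (on a typical Linux Chrome profile, under `~/.config/google-chrome/Default/Extensions/<id>/<version>/`), and that manifest declares which sites the extension can access. The Python sketch below, an illustration rather than a production agent, walks a directory of manifests and reports extensions whose `host_permissions`, `permissions`, or `content_scripts` match patterns reference AI domains; the domain list is an illustrative assumption.

```python
import json
import tempfile
from pathlib import Path

# Illustrative AI domains; not an exhaustive or authoritative list.
AI_HOSTS = ("openai.com", "chatgpt.com", "anthropic.com", "claude.ai",
            "gemini.google.com", "huggingface.co")


def pattern_host(pattern: str) -> str:
    """Extract the host from a match pattern such as '*://*.openai.com/*'."""
    if "://" not in pattern:
        return ""  # API permissions like 'storage', or '<all_urls>'
    host = pattern.split("://", 1)[1].split("/", 1)[0]
    return host.removeprefix("*.").lower()


def host_is_ai(host: str) -> bool:
    return any(host == h or host.endswith("." + h) for h in AI_HOSTS)


def scan_extension_manifests(root: Path):
    """Yield (extension_name, ai_hosts) for manifests under root that request AI sites."""
    for manifest_path in root.rglob("manifest.json"):
        try:
            manifest = json.loads(manifest_path.read_text(encoding="utf-8-sig"))
        except (OSError, json.JSONDecodeError):
            continue  # unreadable or malformed manifest
        if not isinstance(manifest, dict):
            continue
        patterns = list(manifest.get("host_permissions", []))
        patterns += [p for p in manifest.get("permissions", []) if isinstance(p, str)]
        for script in manifest.get("content_scripts", []):
            patterns += script.get("matches", [])
        ai_hosts = sorted({h for h in map(pattern_host, patterns) if h and host_is_ai(h)})
        if ai_hosts:
            yield manifest.get("name", "<unnamed>"), ai_hosts


# Usage: build a throwaway extension directory and scan it.
with tempfile.TemporaryDirectory() as tmp:
    ext_dir = Path(tmp) / "exampleid" / "1.0.0"
    ext_dir.mkdir(parents=True)
    (ext_dir / "manifest.json").write_text(json.dumps({
        "manifest_version": 3,
        "name": "Example AI Helper",
        "permissions": ["storage"],
        "host_permissions": ["https://chatgpt.com/*"],
        "content_scripts": [{"matches": ["*://*.claude.ai/*"], "js": ["content.js"]}],
    }))
    for name, hosts in scan_extension_manifests(Path(tmp)):
        print(name, hosts)  # Example AI Helper ['chatgpt.com', 'claude.ai']
```

Extensions that request `<all_urls>` can also reach AI sites but are not flagged by this sketch; they warrant separate review. Localized extensions may also report a placeholder such as `__MSG_appName__` instead of a readable name.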

SaaS Application Discovery and AI Feature Auditing

A critical but often overlooked aspect of shadow AI detection involves auditing approved SaaS applications for AI features that emerged after initial security reviews. Many enterprise platforms now embed AI capabilities without requiring separate procurement or triggering traditional shadow IT alerts. Organizations need shadow AI detection tools that continuously inventory sanctioned applications, identify when vendors add AI functionality through updates, flag new AI features that lack security approval, and detect when employees enable or use these capabilities without IT knowledge. This application-level monitoring should also track third-party AI integrations, browser plug-ins that add AI to existing tools, and OAuth connections granting AI services access to corporate data repositories.
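For the OAuth part of this auditing, a minimal Python sketch follows. It assumes grant records have already been exported from an identity provider or SaaS admin console into a hypothetical `OAuthGrant` shape, with an `is_ai_service` flag maintained by the security team. The app names are invented, and the sensitive-scope list mixes a few real Google Workspace and Microsoft Graph scope names purely as examples; a real policy would define its own list.

```python
from dataclasses import dataclass

# Example high-risk scopes (illustrative, not a complete list).
SENSITIVE_SCOPES = {
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/gmail.readonly",
    "Files.Read.All",
    "Mail.Read",
    "Sites.Read.All",
}


@dataclass(frozen=True)
class OAuthGrant:
    app_name: str
    scopes: frozenset
    is_ai_service: bool  # assumed to come from a maintained AI-vendor classification
    approved: bool       # whether security has reviewed and approved this app


def flag_risky_ai_grants(grants):
    """Return (app_name, sensitive_scopes) for unapproved AI apps holding sensitive scopes."""
    findings = []
    for grant in grants:
        if grant.is_ai_service and not grant.approved:
            risky = sorted(grant.scopes & SENSITIVE_SCOPES)
            if risky:
                findings.append((grant.app_name, risky))
    return findings


# Hypothetical grant inventory.
grants = [
    OAuthGrant("Meeting Notes AI", frozenset({"Files.Read.All", "User.Read"}), True, False),
    OAuthGrant("Sanctioned Assistant", frozenset({"Files.Read.All"}), True, True),
    OAuthGrant("Calendar Sync", frozenset({"Calendars.Read"}), False, False),
]
print(flag_risky_ai_grants(grants))  # [('Meeting Notes AI', ['Files.Read.All'])]
```

Scope-based filtering is deliberately coarse: it surfaces the grants most likely to expose corporate data first, which suits a triage queue rather than a final verdict.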

Data Loss Prevention and Prompt Analysis

Data loss prevention (DLP) technologies can be adapted to AI-specific risks by monitoring the content employees submit to AI platforms and the outputs they receive. DLP systems configured for AI security can detect when users paste sensitive information, such as Social Security numbers, credit card numbers, or AWS credentials, into web forms associated with AI chatbots, upload files containing proprietary data to AI processing services, copy confidential content into browser-based AI tools, or receive AI-generated outputs that include protected information.
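A minimal Python sketch of the pattern-matching core of such a DLP check, applied to prompt text before it leaves the organization. It uses regular expressions for U.S. Social Security numbers and AWS access key IDs (the `AKIA` and `ASIA` prefixes), and a regex plus the Luhn checksum for card numbers to cut false positives. These detectors are simplified assumptions: commercial DLP engines add context, proximity rules, and many more data types, and AWS secret access keys are harder to catch because they lack a fixed prefix.

```python
import re

SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")
# AWS access key IDs: long-term keys start with AKIA, temporary ones with ASIA.
AWS_KEY_ID_RE = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_prompt(text: str) -> dict:
    """Return the sensitive values found in a prompt, grouped by type."""
    findings = {
        "ssn": SSN_RE.findall(text),
        "credit_card": [m.group() for m in CARD_RE.finditer(text)
                        if luhn_valid(re.sub(r"\D", "", m.group()))],
        "aws_access_key_id": AWS_KEY_ID_RE.findall(text),
    }
    return {kind: values for kind, values in findings.items() if values}


prompt = ("Summarize this ticket: customer SSN 123-45-6789, card 4111 1111 1111 1111, "
          "deploy key AKIAIOSFODNN7EXAMPLE")
print(scan_prompt(prompt))
# {'ssn': ['123-45-6789'], 'credit_card': ['4111 1111 1111 1111'], 'aws_access_key_id': ['AKIAIOSFODNN7EXAMPLE']}
```

In an AI-aware deployment, a check like this could run in a browser extension, forward proxy, or AI gateway so that flagged prompts are blocked or redacted before submission.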

How to Prevent Shadow AI

Preventing shadow AI requires a balanced approach that acknowledges why employees turn to unsanctioned AI tools while establishing governance frameworks, security controls, and cultural practices that guide safe and approved AI adoption. Sustainable prevention depends on more than detection: organizations need to shift internal culture, provide employees with approved AI alternatives that meet their productivity needs, and build genuine understanding of both the value and the risks of AI technologies.

Key Shadow AI Prevention Strategies:

Establish clear AI governance and acceptable use policies – Develop documented policies that define approved AI platforms, specify what data types can be processed through AI systems, establish role-based permissions, and outline approval processes for new AI tools. Communicate these policies clearly through onboarding, training, and accessible documentation to ensure every employee understands boundaries and knows how to work within them.

Provide sanctioned AI alternatives and streamlined approval – An effective response to shadow AI centers on offering approved alternatives that match the capabilities of public AI platforms while maintaining enterprise security controls. Implement a request process for new AI tools with evaluation timelines measured in days rather than months, and deploy enterprise AI solutions that operate within your organization’s existing governance frameworks, data boundaries, and access controls, giving employees approved ways to work with AI without relying on unsanctioned tools.

Invest in AI usage training and security awareness – Change management plays a central role in eliminating shadow AI, and it extends to ongoing education that uses real-world examples of data breaches and compliance violations caused by unsanctioned AI usage. Create internal AI champions who model proper usage, establish feedback channels for requesting new capabilities, and frame AI governance as enablement rather than restriction.

Conduct continuous discovery and policy evolution – Shadow AI security requires regular audits to assess whether approved AI tools adequately meet employee needs, quarterly policy reviews to reflect new AI capabilities and emerging risks, and ongoing dialogue with business units to understand their AI requirements. Treat AI governance as a dynamic program that adapts to new shadow AI vectors, expands approved tool portfolios to cover legitimate use cases, and evolves with changing business needs and regulatory requirements.
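The first strategy, documented acceptable use policies covering approved platforms, permitted data types, and role-based permissions, is easier to enforce consistently when the policy is also expressed as data that tools such as a proxy or AI gateway can evaluate. The Python sketch below assumes a hypothetical four-level data classification scheme and two hypothetical approved tools; the names, roles, and levels are illustrative, not a recommended standard.

```python
from dataclasses import dataclass, field

# Hypothetical classification scheme, ordered from least to most sensitive.
CLASSIFICATION_LEVELS = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}
MOST_SENSITIVE = max(CLASSIFICATION_LEVELS.values())


@dataclass(frozen=True)
class AIToolPolicy:
    tool: str
    max_classification: str  # most sensitive data class the tool may process
    allowed_roles: frozenset = field(default_factory=frozenset)


# Hypothetical approved-tool register.
POLICIES = {
    p.tool: p
    for p in (
        AIToolPolicy("enterprise-assistant", "confidential",
                     frozenset({"engineering", "sales", "finance"})),
        AIToolPolicy("code-assistant", "internal", frozenset({"engineering"})),
    )
}


def is_permitted(role: str, tool: str, data_class: str) -> tuple[bool, str]:
    """Check a proposed AI use against the policy register and explain the outcome."""
    policy = POLICIES.get(tool)
    if policy is None:
        return False, f"{tool} is not an approved AI tool; submit it for review"
    if role not in policy.allowed_roles:
        return False, f"role '{role}' is not approved for {tool}"
    # Unknown data classes are treated as the most sensitive level.
    level = CLASSIFICATION_LEVELS.get(data_class, MOST_SENSITIVE)
    if level > CLASSIFICATION_LEVELS[policy.max_classification]:
        return False, f"{data_class} data exceeds the {policy.max_classification} limit for {tool}"
    return True, "permitted"


print(is_permitted("sales", "enterprise-assistant", "internal"))      # (True, 'permitted')
print(is_permitted("engineering", "code-assistant", "confidential"))  # (False, 'confidential data exceeds the internal limit for code-assistant')
print(is_permitted("finance", "public-chatbot", "public"))            # (False, 'public-chatbot is not an approved AI tool; submit it for review')
```

Returning a reason alongside each decision supports framing governance as enablement rather than restriction: a blocked user learns which boundary applied and can route an unapproved tool into the request process.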

Securing Shadow AI in SaaS Environments

AppOmni addresses shadow AI security challenges by extending comprehensive SaaS Security Posture Management (SSPM) capabilities to include AI-powered platforms, embedded copilots, and autonomous agents operating within enterprise SaaS environments. The AppOmni platform discovers shadow AI deployments across both standalone AI applications like ChatGPT, Claude, and DeepSeek, as well as AI features embedded within sanctioned SaaS platforms such as Microsoft Copilot Studio and ServiceNow Now Assist agents. By treating AI agents as non-human identities and applying Zero Trust principles, AppOmni provides visibility into AI configurations and monitors AI prompt activity to prevent sensitive data exposure, transforming shadow AI from an invisible security gap into a managed component of enterprise SaaS security posture.